I was casually browsing YouTube a while back when this video was recommended to me. As usual, I added it to my Watch Later list and forgot about it for six months. I finally got around to watching it, and I was pleasantly surprised.

Evan lays out a pretty interesting idea for a new UI paradigm that he calls UI2 (Unified Intent Interface). You’ll see what this means in a bit, but here is the gist: in the UI2 framework, the user expresses what they want in natural language, and the system maps that intent into actions inside the app.

The problem with current UI

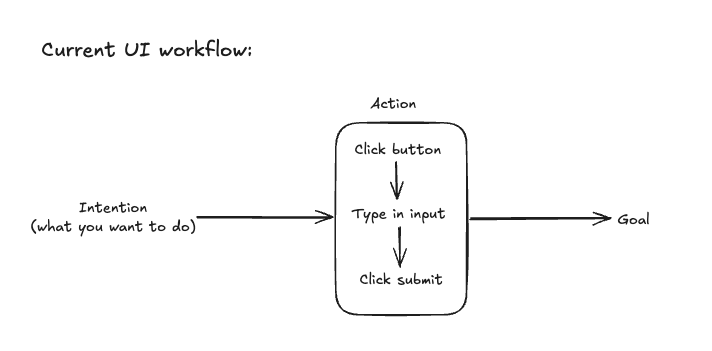

The problem is that the typical UI workflow has too many steps. For example, to send a message you open the Messages app, click on someone’s name, type your message, and then click send. That sounds trivial, but Evan’s point is that the interface inserts an extra layer between what you want and what the computer does. Traditional UIs ask users to learn the grammar of the software: which screen to open, which button to press, which field to fill out, and in what order.

Evan summarizes the older model as: you have an intention, you translate it into actions, and then you perform those actions on the UI. He argues that the translation step, going from intention to UI actions, is where the friction lives. UI2’s claim is that AI can take over that action layer by turning natural language directly into application behaviour.

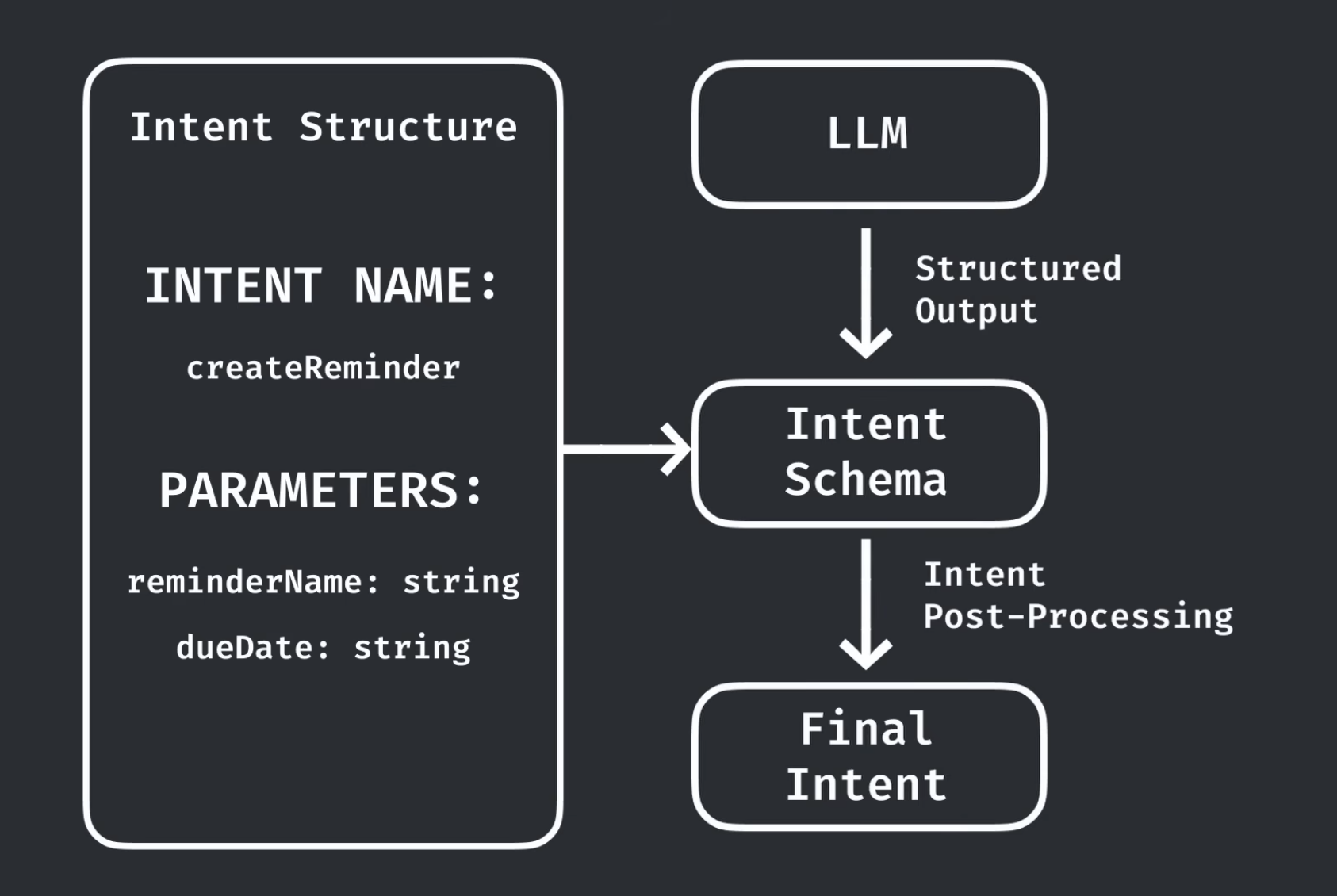

UI2 works by sitting between the user’s intent and the app’s actual functionality. Rather than forcing you to navigate a UI step by step, it lets you describe what you want in natural language, then uses an LLM to translate that into a structured intent the app can act on. That intent is passed through a schema and some post-processing, then turned into a final action or preview. So instead of learning the app’s buttons and workflows, you interact at the level of intention, and the software handles the translation for you.
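To make that flow a bit more concrete, here is a rough sketch of the pipeline as I understand it: text goes in, a model returns a structured intent, the intent is checked against a schema, cleaned up, and turned into a preview. This is my own illustration, not Evan's code. The intent names, the `INTENT_SCHEMA` table, and the `stub_llm` function (which stands in for a real model call and returns a canned answer) are all assumptions made up for the example.

```python
import json
from dataclasses import dataclass

# Hypothetical intent schema: intent name -> required field names.
INTENT_SCHEMA = {
    "send_message": ["recipient", "body"],
    "create_event": ["title", "date"],
}


@dataclass
class Preview:
    intent: str
    fields: dict
    summary: str


def stub_llm(utterance: str) -> str:
    """Stand-in for a real LLM call; always returns the same canned JSON."""
    return json.dumps({
        "intent": "send_message",
        "fields": {"recipient": " sam ", "body": "Running 10 minutes late"},
    })


def validate(raw: str) -> dict:
    """Parse the model output and check it against the schema."""
    data = json.loads(raw)
    intent = data.get("intent")
    if intent not in INTENT_SCHEMA:
        raise ValueError(f"unknown intent: {intent!r}")
    fields = data.get("fields", {})
    missing = [name for name in INTENT_SCHEMA[intent] if name not in fields]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return data


def post_process(data: dict) -> dict:
    """Tidy up field values (trim whitespace, capitalize the recipient)."""
    fields = {k: v.strip() if isinstance(v, str) else v
              for k, v in data["fields"].items()}
    if "recipient" in fields:
        fields["recipient"] = fields["recipient"].title()
    return {"intent": data["intent"], "fields": fields}


def to_preview(utterance: str, llm=stub_llm) -> Preview:
    """Run the whole pipeline and build a preview; nothing is executed."""
    data = post_process(validate(llm(utterance)))
    details = ", ".join(f"{k}={v!r}" for k, v in data["fields"].items())
    return Preview(data["intent"], data["fields"], f"{data['intent']}: {details}")


preview = to_preview("tell Sam I'm running 10 minutes late")
print(preview.summary)
```

The part I find worth noticing is that the model only ever produces data. The app decides what counts as a valid intent, and the user sees a preview before anything actually happens.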

The video explains this a lot better than I can in text, so please give it a watch.

What makes UI2 interesting

I thought it was a very cool piece with interesting ideas, and the more I thought about it, the more the whole interface started to feel vaguely familiar.

This UI2 framework kinda turns your device into a CLI (but not really). It reminds me a little bit of a command palette, a little bit of a CLI, and a little bit of function calling. But it is not exactly any of those. A CLI still expects you to know the command vocabulary. A command palette still usually maps to discrete pre-defined actions. UI2 is more ambitious: it tries to let natural language become the primary interface layer while still grounding that language in a structured set of application intents. Evan explicitly describes it as merging different app intents into a single natural-language interface.

The value of this framework comes in two parts:

- The ability to stack hardcoded tasks. You no longer need to know exactly where the feature lives or which sequence of controls triggers it.

- A tight feedback loop via real-time responses.
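Both points can be shown in a few lines. The sketch below is only my own toy version under heavy assumptions: `stub_parse` is a keyword matcher standing in for the LLM, and the two tasks and the `log` list are hypothetical. One sentence maps to a stack of hardcoded tasks, the preview is recomputed on every change to the input, and nothing runs until the user confirms.

```python
from typing import Callable

# Hypothetical hardcoded tasks; each one only records what it did.
log: list[str] = []


def create_event(title: str) -> None:
    log.append(f"event created: {title}")


def send_message(recipient: str) -> None:
    log.append(f"message sent to {recipient}")


TASKS: dict[str, Callable[[str], None]] = {
    "create_event": create_event,
    "send_message": send_message,
}


def stub_parse(text: str) -> list[tuple[str, str]]:
    """Keyword-matching stand-in for the LLM: returns (task, argument) steps."""
    lowered = text.lower()
    steps = []
    if "lunch" in lowered:
        steps.append(("create_event", "Lunch"))
    if "tell sam" in lowered:
        steps.append(("send_message", "Sam"))
    return steps


def preview(text: str) -> list[str]:
    """Describe the stacked steps without running any of them."""
    return [f"{task}({arg!r})" for task, arg in stub_parse(text)]


def confirm(text: str) -> None:
    """Run the stacked steps in order once the user accepts the preview."""
    for task, arg in stub_parse(text):
        TASKS[task](arg)


# Simulate typing: the preview updates as the sentence grows.
for partial in ["schedule lunch", "schedule lunch and tell Sam"]:
    print(partial, "->", preview(partial))
```

Calling `confirm("schedule lunch and tell Sam")` afterwards would run both tasks in order. Until then `log` stays empty, which is the whole point of the feedback loop.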

I believe the ability to stack those hardcoded tasks is already taken care of by Apple Intelligence, so the value prop for this library is the real-time nature of it all. You are forced to verify the result rather than just pressing send and forgetting about it, as you do in current AI app UIs. I think it’s a pretty interesting idea that would work exceptionally well in some cases.

My take

I think we’ll see way more apps include an AI-powered command palette that simply maps your natural language input to the actions available in the app. It seems to me that this is how natural language will come to drive UIs.
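For a sense of what such a palette boils down to, here is a deliberately naive sketch. It ranks a fixed set of app actions against the query by counting shared words, which is a crude stand-in for whatever model a real app would use. The action names and descriptions are hypothetical.

```python
# Hypothetical actions an app exposes, each with a short description.
ACTIONS = {
    "toggle_dark_mode": "switch between dark and light theme",
    "export_pdf": "export the current document as a pdf file",
    "invite_member": "invite a new member to the workspace",
}


def tokens(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(text.lower().split())


def rank_actions(query: str, actions: dict[str, str] = ACTIONS) -> list[str]:
    """Return action names ordered by word overlap with the query.

    Word overlap is a placeholder for a real model; actions with no
    overlap at all are dropped.
    """
    q = tokens(query)
    scored = [(len(q & tokens(desc)), name) for name, desc in actions.items()]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for score, name in scored if score > 0]


print(rank_actions("export as pdf"))
print(rank_actions("make it dark"))
```

Swap the scoring function for an LLM or an embedding lookup and the shape stays the same: free-form text in, one of the app's known actions out.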